Reinforcement learning for process design

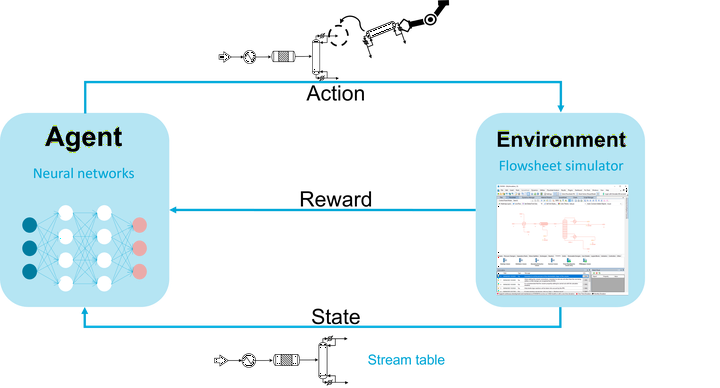

Process synthesis is undergoing a disruptive transformation accelerated by artificial intelligence. We propose a reinforcement learning algorithm for chemical process design based on a state-of-the-art actor-critic logic. The algorithm represents chemical processes as graphs and uses graph convolutional neural networks to learn from these process graphs. Specifically, the graph neural networks are embedded in the agent architecture, where they process the states and make decisions. Flowsheets are generated through a hierarchical, hybrid decision-making process: unit operations are placed iteratively as discrete decisions, and the corresponding design variables are selected as continuous decisions. We demonstrate that the method can design economically viable flowsheets in an illustrative case study comprising equilibrium reactions, azeotropic separation, and recycles. The results show quick learning in discrete, continuous, and hybrid action spaces. The method is well suited to extension to larger state-action spaces and to an interface with process simulators in future research.
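The hierarchical, hybrid decision structure described above can be illustrated with a minimal sketch. It is not the authors' implementation: a random policy stands in for the learned graph-neural-network actor, the flowsheet is restricted to a linear chain, and the unit-operation catalogue, the variable bounds, and all names (`UNIT_OPS`, `Flowsheet`, `sample_hybrid_action`, `generate_flowsheet`) are hypothetical choices made for illustration.

```python
import random
from dataclasses import dataclass, field

# Hypothetical catalogue: unit operation -> (low, high) bounds of one
# continuous design variable, or None if the unit has no design variable.
UNIT_OPS = {
    "reactor": (1.0, 20.0),  # assumed: reactor length
    "column": (0.1, 0.9),    # assumed: distillate-to-feed ratio
    "recycle": (0.0, 1.0),   # assumed: recycled fraction
    "product": None,         # closes the open stream and ends the episode
}


@dataclass
class Flowsheet:
    """Process graph: nodes are unit operations, edges are streams."""
    nodes: list = field(default_factory=lambda: ["feed"])
    edges: list = field(default_factory=list)   # (source index, target index)
    params: dict = field(default_factory=dict)  # node index -> design variable

    def add_unit(self, unit, value):
        """Append a unit downstream of the most recently placed node."""
        self.nodes.append(unit)
        idx = len(self.nodes) - 1
        self.edges.append((idx - 1, idx))
        if value is not None:
            self.params[idx] = value
        return idx


def sample_hybrid_action(rng):
    """Level 1: discrete choice of a unit. Level 2: continuous design variable."""
    unit = rng.choice(list(UNIT_OPS))
    bounds = UNIT_OPS[unit]
    value = None if bounds is None else rng.uniform(*bounds)
    return unit, value


def generate_flowsheet(rng, max_units=5):
    """Place units one at a time until a product node or the unit limit."""
    flowsheet = Flowsheet()
    for _ in range(max_units):
        unit, value = sample_hybrid_action(rng)
        flowsheet.add_unit(unit, value)
        if unit == "product":
            break
    return flowsheet


flowsheet = generate_flowsheet(random.Random(0))
```

In a learned agent, the two sampling steps in `sample_hybrid_action` would presumably be replaced by policy heads conditioned on an embedding of the current process graph, and a critic would estimate the value of that graph state.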

Related publications

- Qinghe Gao et al. “Transfer learning for process design with reinforcement learning”. In: Computer Aided Chemical Engineering. Elsevier, 2023, pp. 2005–2010. doi: 10.1016/b978-0-443-515274-0.50319-x

- Gao, Q., & Schweidtmann, A. M. (2023). Deep reinforcement learning for process design: Review and perspective. arXiv preprint arXiv:2308.07822.